By Nat Ives

The July advertiser boycott against Facebook Inc. over the way it handles unwelcome content generated some results, at least in the eyes of organizers, and a flood of headlines.

But parts of the ad industry also are taking a wider look at the other players in social media. Although there are no signs of new boycotts brewing, the scrutiny could affect how social media companies handle hate speech, misinformation and other content.

IPG Mediabrands, a media planning-and-buying group within ad-agency giant Interpublic Group of Cos., has begun what it says will be a quarterly report comparing top social media platforms' content policies and practices.

The report is meant to help marketers determine which platforms fit their values and to avoid focusing only on the platform in the hot seat at the moment, said Elijah Harris, global head of social at Reprise, an agency that is part of Mediabrands.

"It's important to have an objective assessment and not simply allow the news cycle to dictate how we vet these partners," Mr. Harris said.

Some marketers have been making their own painstaking, case-by-case evaluations of the platforms.

"After a 30-day re-evaluation of our national social media advertising on all social platforms, we are returning to several social media outlets, including YouTube and Pinterest," a Ford Motor Co. spokesman said. "We are still evaluating other partners, including Facebook, Instagram, Snapchat, Twitter and TikTok."

Coca-Cola Co. has resumed advertising on platforms including YouTube, part of Alphabet Inc.'s Google, and LinkedIn, part of Microsoft Corp., after a pause, according to the company, but hasn't returned to platforms such as Facebook, Instagram and Twitter Inc.

"As we continue to assess each platform, we can confirm that our re-entry to social media will be a phased approach by channel," a spokeswoman for Coca-Cola said.

Ranking the Platforms

Mediabrands' first quarterly report, which came out Thursday, ranked YouTube No. 1 for what it calls media responsibility, giving it the highest cumulative score across 10 areas such as the handling of hate speech, measures against misinformation and transparency for advertisers. Out of nine platforms evaluated, Facebook ranked fifth and TikTok came in last.

YouTube responded to a well-publicized brand-safety incident in 2017, when some brands pulled back after they found their ads running alongside extremist content on the site. YouTube restricted ads to a smaller pool of larger channels, for example, and made it easier for marketers to keep their ads away from certain content.

"YouTube learned the hard way and has actually leaned into the changes that needed to be made," said Mr. Harris.

Mediabrands said TikTok fell below the average on areas such as providing controls for advertisers and transparency on ad placements. TikTok was unable to fully answer some questions around diversity, equity and inclusion, according to Mediabrands.

TikTok, a unit of ByteDance Ltd., said it takes steps to ensure a positive experience for users and brands. The company is opening what it calls transparency and accountability centers where observers can watch its content moderators at work, and releases regular reports on its enforcement of its policies.

"Promoting a safe environment for everyone on TikTok is our top priority," Blake Chandlee, vice president of global business solutions at TikTok, said in a statement.

Facebook said this week it is improving the detection and removal of hate speech from its namesake platform as well as from Instagram. In the second quarter of the year, it detected 95% of the hate speech it removed from Facebook before someone else reported it, up from 89% in the first quarter, the company said. It also has committed to other actions, including hiring a civil rights leader at the vice president level.

"We've invested billions of dollars to keep hate off of our platform, and we have a clear plan of action with the Global Alliance for Responsible Media and the industry to continue this fight," a spokeswoman said.

The Global Alliance for Responsible Media is a group of advertisers, media companies, technology companies and others focused on improving safety standards online. Facebook's commitments to GARM include adopting proposals regarding the definition of hate speech and submitting to two outside audits of its transparency reports and ad policies.

Twitter said its policies protect advertisers as well as users. "We are proud of what we have accomplished by developing brand-safe policies and platform capabilities, and as always, are committed to continuing this work," a spokeswoman said.

Snap Inc., parent of Snapchat, said its service avoids amplifying misinformation or other unwelcome content, partly because it offers a curated feed of content and lacks an open news feed.

Write to Nat Ives at nat.ives@wsj.com

(END) Dow Jones Newswires

August 13, 2020 06:14 ET (10:14 GMT)

Copyright (c) 2020 Dow Jones & Company, Inc.

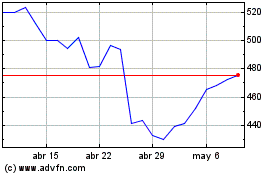

Meta Platforms (NASDAQ:META)

Gráfica de Acción Histórica

De Mar 2024 a Abr 2024

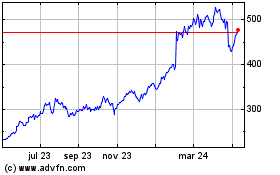

Meta Platforms (NASDAQ:META)

Gráfica de Acción Histórica

De Abr 2023 a Abr 2024